Home Network Mission Control, Phase 1.1: Two Bugs Past the Goal Line

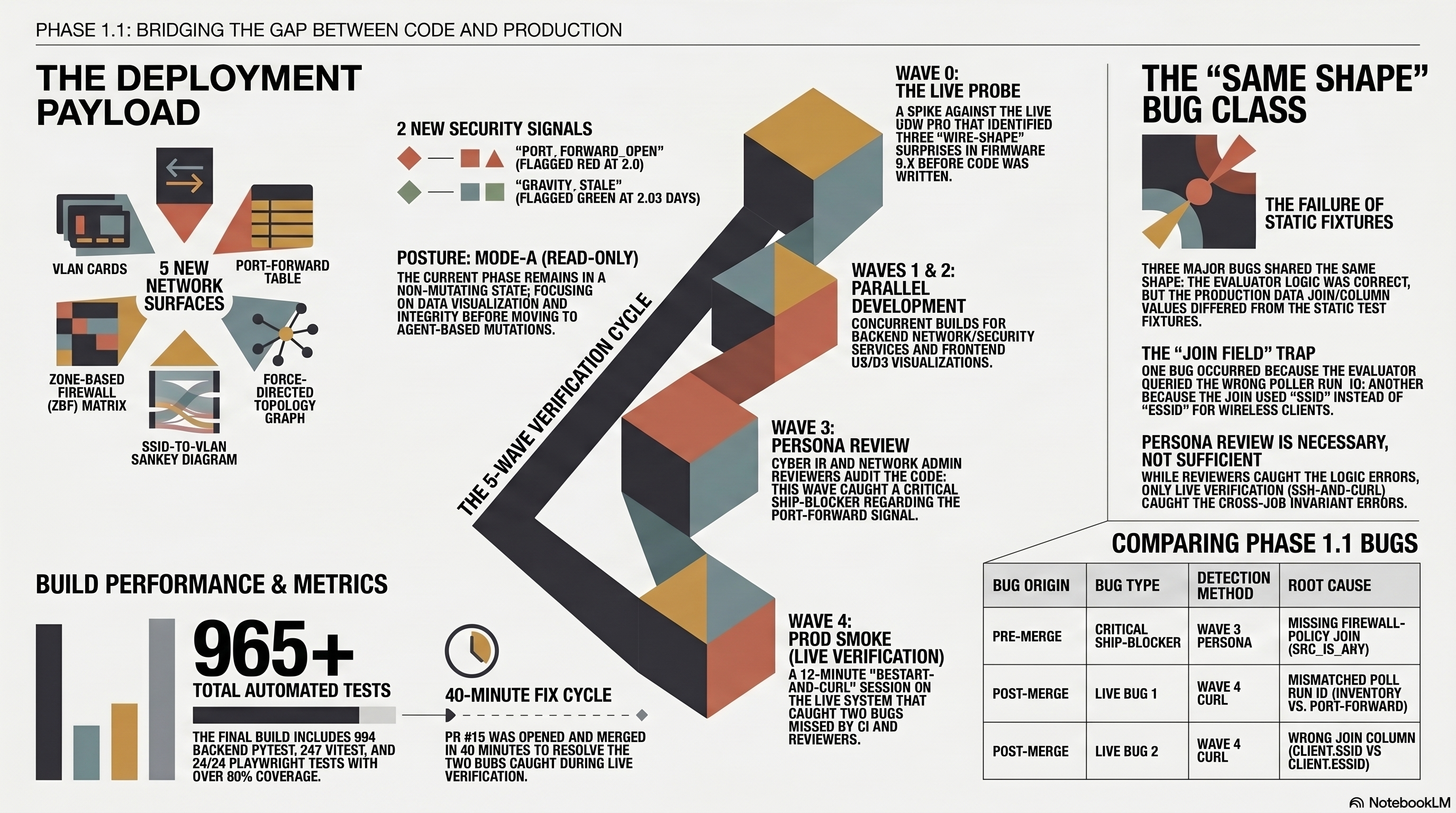

Part 5 of the home network dashboard build. Phase 1.1 ships the Network tab, five new surfaces, two new security signals, and 694 backend tests at the close. A 5-wave persona team caught a critical ship-blocker before merge. Then in-session live verification on the deployed dashboard caught two more bugs of the exact same shape: wrong join field, silent zero, and fixtures that encoded the same wrong assumption. The marquee lesson is that persona-team review is necessary but not sufficient. The second line of defense is running the live system in-session, not waiting for a scheduled probe to find drift.

Phase 1.1 of the Home Network Mission Control Dashboard shipped on Monday. Five new surfaces on a fresh /network tab. Two new security signals. 694 backend pytest, 247 Vitest, 24/24 Playwright at the close. PR #14 for the feature, PR #15 the next day for the two bugs the live system caught after the merge celebration. One Wave 0 probe at the start that turned up three concrete UDM v9+ surprises before any code got written. One persona-team dispatch in the middle that caught a CRITICAL ship-blocker on the marquee security signal. One restart-and-curl session at the end that caught two MORE bugs of the same class as the ship-blocker, on different surfaces.

That's the build report. The interesting part of this post is the same shape it was last week: the gap between "tests pass" and "production works." Phase 1.4 in V2 paid that gap five times in one Sunday. Phase 1.1 paid it twice in one Monday. The bug class is identical: the test asserts the right column, the test passes, the feature works against the fixture, and production has been running on empty data because the join field was wrong. The persona team caught one of these. The persona team missed two more. The two it missed were caught by running the live system in the same session as the merge, not by trusting the scheduled probe to surface drift in eight days.

Series Context

This is part 5 of the Home Network Mission Control series. Direct prerequisites:

- Phase 1: the chassis, 12 workstreams, four enrichment waves, mode-A read-only design, 497 backend tests at the end.

- Phase 2: the cyberpunk re-skin, the four-persona reviewer team, 18 findings, 5 same-session fixes.

- Phase 3: Feature 1 (DNS click-throughs) shipped via a re-runnable plan doc that two different agentic CLIs took turns on.

- Phase 4 (V2): the Threat Intel tab and the post-merge audit that caught five bugs CI never saw.

Phase 1.1 here lands the Network tab inside the cyberpunk shell, lights two new security signals, and gets bitten twice by the same bug class V2 was supposed to teach me to look for.

Open the companion deck: slide-01 through slide-13. Infographic in the Wave 0 section below.

What Phase 1.1 Actually Ships

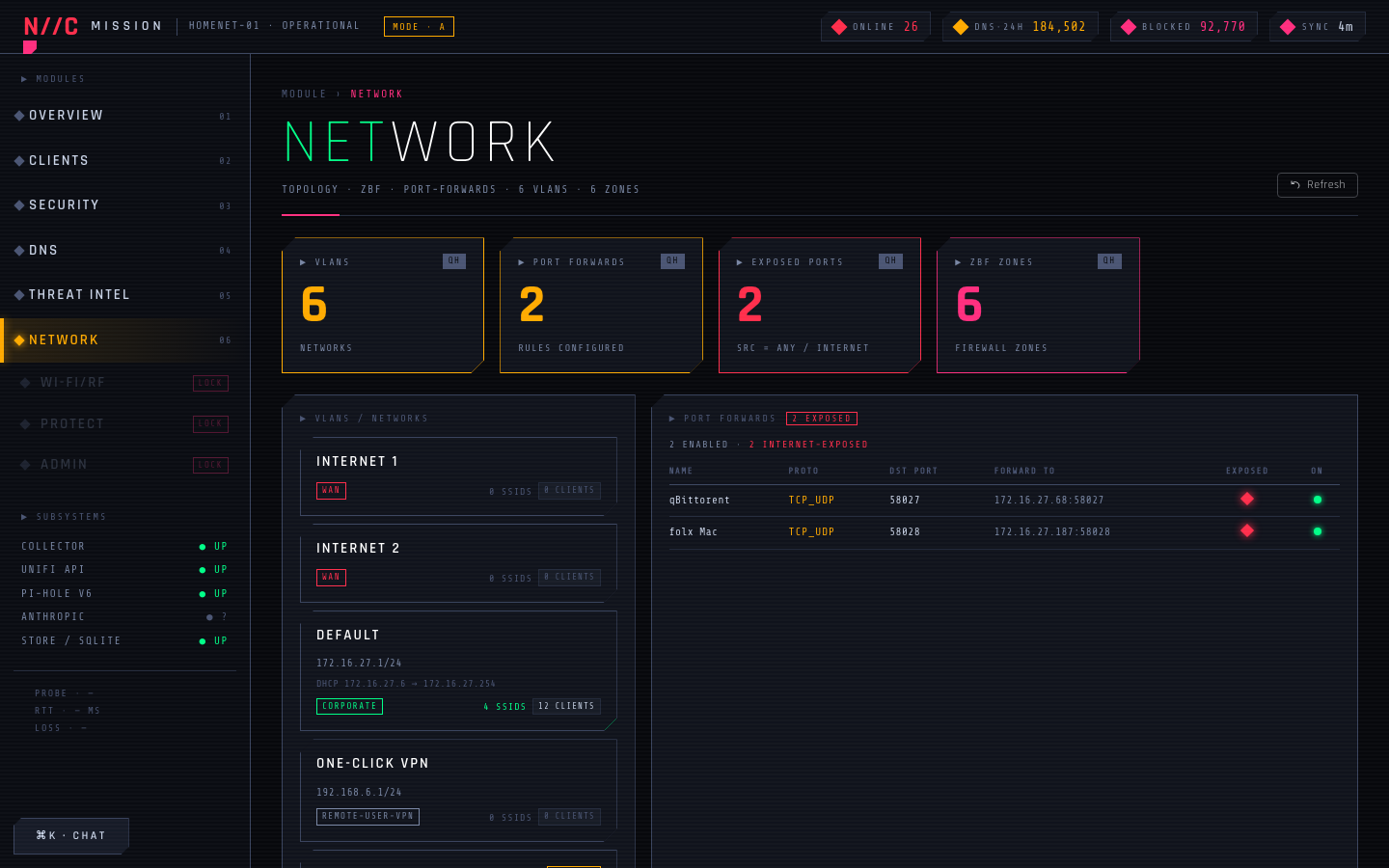

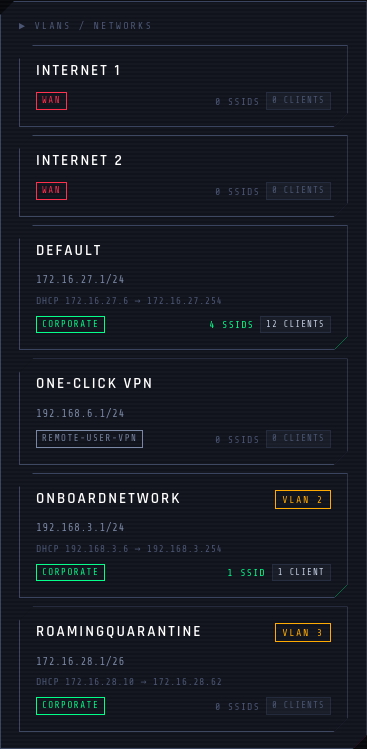

Five surfaces on one new tab, all fed by the same nightly UniFi inventory poll plus a dedicated poll_port_forwards job for the rule table.

- VLAN cards at the top of the page, one per network. Subnet, gateway, DHCP range, IGMP snooping flag, and a live client-count chip pulled from the same client inventory that drives

/clients. - Port-forward table. Every NAT rule from UniFi's

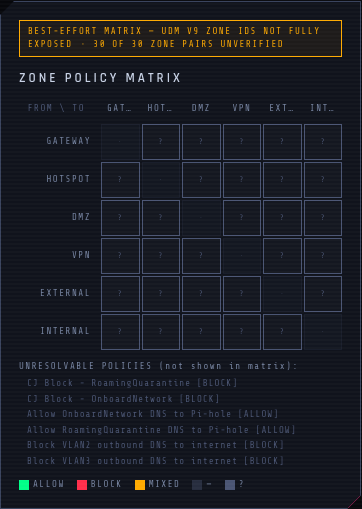

/proxy/network/api/s/{site}/rest/portforward, normalized into(name, dst_port, fwd_ip, fwd_port, src_is_any, enabled). Thesrc_is_anycolumn drives the new security signal. - ZBF zone matrix. Zone-based firewall policies summarized as a from-zone by to-zone grid, with a colored cell per zone pair indicating whether traffic is allowed by some policy, blocked by some policy, or unverifiable from the wire shape we have.

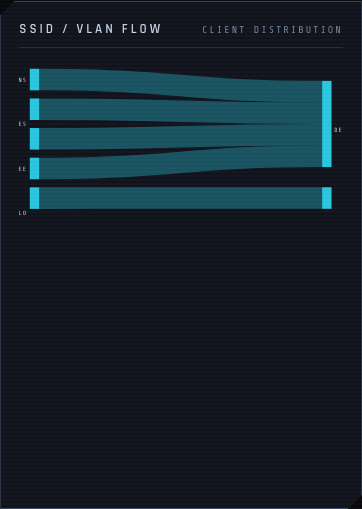

- SSID to VLAN Sankey. Wireless SSIDs flowing into VLANs flowing into client counts. D3 Sankey, click-to-zoom on the page.

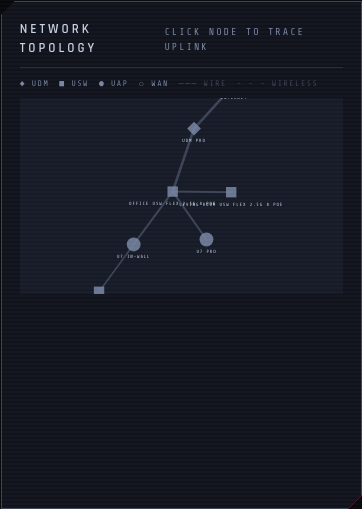

- Topology graph. UniFi's

get_topology()collapsed into a force-directed graph: gateway, switches, access points, and the client devices attached to them.

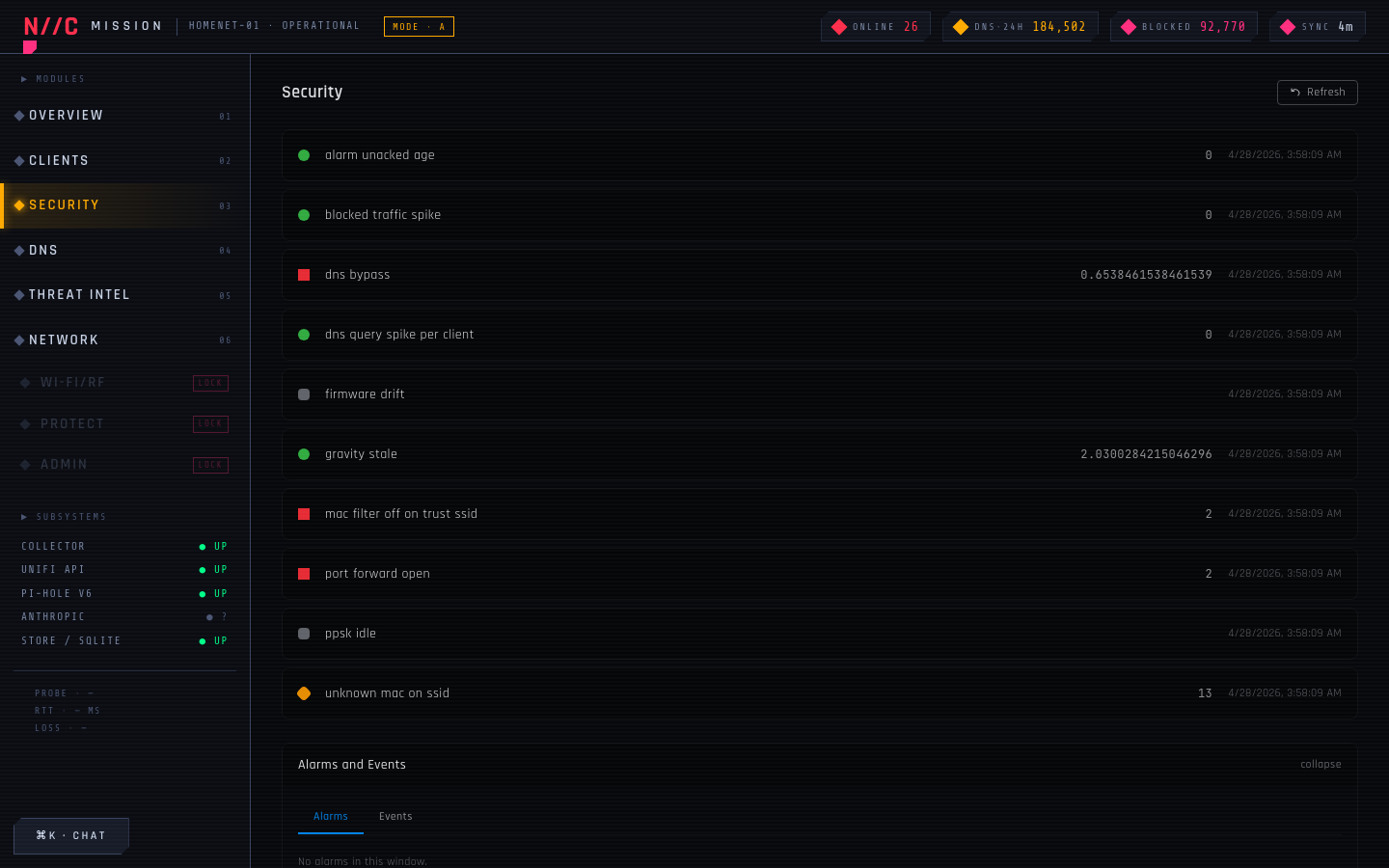

Two new security signals lit at the same time:

| Signal | Heuristic | First-run state on my LAN |
|---|---|---|
| port_forward_open | NAT rule with src_is_any = true AND enabled = true | RED, value 2.0 (qBittorrent + folx Mac flagged) |
| gravity_stale | Days since last successful Pi-hole gravity update | GREEN, 2.03 days |
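The port_forward_open heuristic from the table can be sketched as a small pure function. This is a minimal sketch under assumptions. The PortForwardRow dataclass mirrors the normalized tuple above. The function name is hypothetical. Treating the signal's value as the count of exposed rules is my inference from the first-run value of 2.0 for two flagged apps, and the IPs are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PortForwardRow:
    # One normalized NAT rule: (name, dst_port, fwd_ip, fwd_port, src_is_any, enabled).
    name: str
    dst_port: str
    fwd_ip: str
    fwd_port: str
    src_is_any: bool
    enabled: bool


def evaluate_port_forward_open(rows: list[PortForwardRow]) -> tuple[str, float]:
    """Hypothetical evaluator: RED when any enabled rule accepts any source."""
    exposed = [r for r in rows if r.src_is_any and r.enabled]
    # Assumption: the signal's value is the number of exposed rules.
    status = "RED" if exposed else "GREEN"
    return status, float(len(exposed))


rows = [
    PortForwardRow("qBittorrent", "51413", "192.168.1.20", "51413", True, True),
    PortForwardRow("folx", "6881", "192.168.1.21", "6881", True, True),
    PortForwardRow("nas-ssh", "2222", "192.168.1.2", "22", False, True),
]
print(evaluate_port_forward_open(rows))  # ('RED', 2.0)
```

An empty or wrongly populated row list returns ('GREEN', 0.0). That branch is only as honest as the rows feeding it.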

The signals plug into the existing security panel from Phase 1. Same evaluator pattern, same red/amber/green semantics, same latest_completed_poll_run() helper. That last one is the load-bearing piece. Hold that thought.

Wave 0: The Probe That Found Three UDM v9+ Surprises

Before any code, I ran a Wave 0 spike against the live UDM Pro through the UniFi MCP. The job: ask the MCP for the exact wire shape of every endpoint Phase 1.1 was going to depend on. The point of the probe was to catch wire-format drift before the persona team got involved. UniFi shipped firmware 9.x last quarter. My fixtures were recorded against 8.x.

The probe came back with three concrete surprises.

Surprise one: the src field is gone from portforward. The /proxy/network/api/s/{site}/rest/portforward endpoint used to return a src field per rule containing either "any" or a CIDR. UDM 9.x dropped it entirely. The raw REST layer no longer ships it; the UniFi MCP's tool surface had been silently translating src=null to "any" for back-compat. Source restriction migrated to ZBF policies named Allow Port Forward {name}, separately stored under /proxy/network/api/s/{site}/rest/firewallpolicy. If I wanted to know whether a port-forward was actually open to the internet, I had to look at two endpoints, not one.

Surprise two: ZBF policies have empty zone-id fields. A ZBF policy returns source_zone_id: "" and destination_zone_id: "" instead of UUIDs that match list_zbf_zones. The actual zone pair is encoded in the policy's compound id field, which is two MongoDB ObjectId fragments concatenated with a separator. Those fragments do NOT match the UUID zone IDs from the zones endpoint. Two different ID spaces. The matrix can't reliably display every zone pair as a single color, because the server never told me which pair this policy applies to.

Surprise three: the topology has a phantom WAN MAC. get_topology() returns a list of nodes (gateway, switches, APs, clients) plus a list of edges. One edge consistently references a MAC that's not in the node list: the upstream gateway uplink. The renderer chokes on this because the D3 force layout can't draw an edge to a node that doesn't exist. I had to insert a synthetic "wan" node so the graph wouldn't error.
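The synthetic-node workaround can be sketched as a pre-pass that runs before the graph reaches D3. This sketch makes two assumptions about how the poller stores get_topology() output: nodes are dicts keyed by mac, and edges are dicts with source and target MACs. Those are not UniFi's documented field names. The "wan" id is likewise hypothetical. Any edge endpoint missing from the node list gets rewired to one synthetic WAN node, so the force layout never sees a dangling reference.

```python
WAN_NODE_ID = "wan"  # hypothetical id for the synthetic upstream node


def add_synthetic_wan(nodes: list[dict], edges: list[dict]) -> tuple[list[dict], list[dict]]:
    """Rewire edges that reference unknown MACs to a single synthetic 'wan' node.

    Assumed shapes: node {"mac": ..., "type": ...}, edge {"source": mac, "target": mac}.
    Inputs are not mutated.
    """
    known = {n["mac"] for n in nodes}
    fixed_edges = []
    needs_wan = False
    for edge in edges:
        e = dict(edge)  # copy so the caller's edge dicts stay untouched
        for end in ("source", "target"):
            if e[end] not in known:
                e[end] = WAN_NODE_ID  # the phantom uplink MAC lands here
                needs_wan = True
        fixed_edges.append(e)
    out_nodes = list(nodes)
    if needs_wan:
        out_nodes.append({"mac": WAN_NODE_ID, "type": "wan", "name": "WAN (synthetic)"})
    return out_nodes, fixed_edges
```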

Wave 0 wrote three findings into the slice plan before any code got written. Each finding turned into an explicit decision in Phase 1.1's design. The port-forward table joins the rule list with a separate query against firewall policies named Allow Port Forward *. The zone matrix renders unverifiable cells with a ? glyph and a footer disclaimer instead of guessing. The topology renderer inserts a synthetic WAN node before passing the graph to D3. None of those decisions would have been visible from a static fixture review. The probe surfaced them by hitting the live API.
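The "render ? instead of guessing" decision for the zone matrix can be sketched as a cell classifier. These parts are assumptions: the policy dict shape, the "ALLOW"/"BLOCK" action values, and the choice that an allow wins a cell's color. Any policy whose zone IDs come back empty is counted as unattributed. Cells with no attributed evidence then show "?", because one of those policies might apply there.

```python
from itertools import product


def build_zone_matrix(zones: list[str], policies: list[dict]) -> tuple[dict, int]:
    """Return ({(from_zone, to_zone): cell}, unattributed_policy_count).

    cell is "allow", "block", "?" (unverifiable from the wire shape), or "none".
    Hypothetical policy shape: {"source_zone_id", "destination_zone_id", "action"}.
    """
    attributed: dict[tuple[str, str], set[str]] = {}
    unattributed = 0
    for policy in policies:
        src = policy.get("source_zone_id", "")
        dst = policy.get("destination_zone_id", "")
        if not src or not dst:
            # UDM 9.x returns "" here; don't guess the pair from the compound id.
            unattributed += 1
            continue
        attributed.setdefault((src, dst), set()).add(policy["action"])

    matrix = {}
    for pair in product(zones, zones):
        actions = attributed.get(pair, set())
        if "ALLOW" in actions:  # display choice: any allow wins the cell color
            matrix[pair] = "allow"
        elif "BLOCK" in actions:
            matrix[pair] = "block"
        elif unattributed:
            matrix[pair] = "?"  # some unattributed policy might apply to this pair
        else:
            matrix[pair] = "none"
    return matrix, unattributed
```

The returned count is what a footer disclaimer would report. On UDM 9.x, where every policy arrives with empty zone IDs, every cell comes back "?", which is the honest answer.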

Wave 0 against the live API beats fixture-review for wire-format drift

A persona team reading static fixtures asks "is the design correct against this fixture." A Wave 0 probe against the live API asks "did the upstream change shape since the fixture was recorded." Those are different questions. UniFi 9.x shipped four months ago. My fixtures were 8.x. The probe took fifteen minutes and saved me from designing the port-forward security signal against an src field that no longer exists.
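At its simplest, the "did the upstream change shape" question is a key diff between a recorded fixture and a live record. This is a minimal sketch. The portforward field names below are illustrative. The diff has to run against the raw REST response, because the MCP was back-filling src. It only sees top-level keys, so it would catch Surprise one (a dropped field) but not Surprise two (keys present, values empty).

```python
def wire_shape_drift(fixture_record: dict, live_record: dict) -> dict[str, list[str]]:
    """Diff the top-level keys of a recorded fixture against a live API record."""
    fixture_keys, live_keys = set(fixture_record), set(live_record)
    return {
        "removed": sorted(fixture_keys - live_keys),  # fields the code still expects
        "added": sorted(live_keys - fixture_keys),    # fields the upstream now ships
    }


# Illustrative portforward records: an 8.x-era fixture vs a 9.x raw REST response.
fixture_8x = {"name": "qBittorrent", "dst_port": "51413", "fwd": "192.168.1.20",
              "fwd_port": "51413", "src": "any", "enabled": True}
live_9x = {"name": "qBittorrent", "dst_port": "51413", "fwd": "192.168.1.20",
           "fwd_port": "51413", "enabled": True}
print(wire_shape_drift(fixture_8x, live_9x))  # {'removed': ['src'], 'added': []}
```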

The 5-Wave Persona Team Pattern

The dispatch shape Phase 1.1 used was the same five-wave structure I've been refining since Phase 2. Wave 0 is a probe against the live system. Wave 1 runs two backend slices in parallel, and Wave 2 runs two frontend slices in parallel. Wave 3 runs persona reviewers in parallel, and Wave 4 is an integration pass. The captain (me) integrates findings between waves and serializes the slice menu.

Wave 0 produced three findings. Wave 1 produced clean backend slices because the wire-shape decisions were already made. Wave 2 produced two frontend slices, one for tables and stat panels, one for D3 visualizations, since those have totally different testing characteristics. Wave 3 produced eight pre-merge findings, one of them the CRITICAL ship-blocker I want to walk through because it's the bug that maps onto the two follow-up bugs the prod smoke caught.

Wave 3 Catches the Ship-Blocker

The Cyber IR analyst reviewer ran against the unmerged port_forward_open evaluator. The reviewer's read was: "this signal is going to ship green on a system that has open port forwards." That's the worst way for a signal to be wrong. A red signal that's actually green is a false alarm: the operator investigates, finds nothing, and decides "no action needed." Annoying, but operationally honest. A green signal that's actually red is operationally lying, because nobody investigates a green.

The reviewer's reasoning: the evaluator was checking src_is_any = true, but the column population was wrong. The Wave 0 probe had said the src field is gone from UDM 9.x. The column population was supposed to read the firewall-policy join (the Allow Port Forward {name} rules) and infer src_is_any from whether the source was the WAN or Internet zone. The implementation skipped that join entirely. The poller wrote src_is_any = false on every row regardless, so every row failed the src_is_any = true filter. Zero rows matched. The signal evaluated to "no exposed forwards" and returned green.

The reviewer caught it because the persona's job description was "look at this from a defender's seat." A defender doesn't trust a green status without checking the population logic. A test would not have caught this, because the test fixtures populated src_is_any = true directly, then asserted the signal evaluates red. The fixture was right against the test logic; the production poller was wrong against the wire shape; both ran against the same column without ever meeting.

The fix landed before merge. The poller now pulls firewall policies in parallel with the port-forward list and joins them on the Allow Port Forward {name} convention. It infers src_is_any from whether the policy's source zone resolves to the internet-facing zone. Three regression tests guard the inference. Of Wave 3's eight findings, this was the only CRITICAL.
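A minimal sketch of that join follows; treat everything beyond the naming convention as assumption. The policy fields (name and a resolved source_zone) and the internet_zone_ids set are hypothetical, and so is the rule field fwd for the forward IP. What to do when a rule has no matching policy is a judgment call. This sketch flags it as open to any source, which fails loud rather than green.

```python
from dataclasses import dataclass

POLICY_PREFIX = "Allow Port Forward "  # naming convention observed on UDM 9.x


@dataclass(frozen=True)
class PortForwardRow:
    name: str
    dst_port: str
    fwd_ip: str
    fwd_port: str
    src_is_any: bool
    enabled: bool


def normalize_port_forwards(rules: list[dict], policies: list[dict],
                            internet_zone_ids: set[str]) -> list[PortForwardRow]:
    """Join portforward rules to their 'Allow Port Forward {name}' policies."""
    # Index policies by the rule name embedded after the prefix.
    by_name = {
        p["name"][len(POLICY_PREFIX):]: p
        for p in policies
        if p.get("name", "").startswith(POLICY_PREFIX)
    }
    rows = []
    for rule in rules:
        policy = by_name.get(rule["name"])
        if policy is None:
            # Assumption: no matching policy means no provable restriction; flag it.
            src_is_any = True
        else:
            src_is_any = policy.get("source_zone") in internet_zone_ids
        rows.append(PortForwardRow(
            name=rule["name"],
            dst_port=str(rule.get("dst_port", "")),
            fwd_ip=rule.get("fwd", ""),
            fwd_port=str(rule.get("fwd_port", "")),
            src_is_any=src_is_any,
            enabled=bool(rule.get("enabled", False)),
        ))
    return rows
```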

The Merge, the Restart, and the First Live Bug

I merged PR #14 on Sunday night. Backend 692 tests, Vitest 246, Playwright 24/24. All four CI jobs green. The persona team caught the C-1 ship-blocker. The Wave 0 probe had de-risked the wire shape. The slice plan was followed. By every artifact I had access to, the feature shipped clean.

Then I restarted the backend on the Mac mini and curled /api/security/signals/latest. The port_forward_open signal evaluated GREEN with value 0.0. On the same LAN where qBittorrent and a folx Mac both have open NAT rules I haven't closed yet.

Same bug class as Wave 3. Different shape.

The evaluator was querying latest_completed_poll_run("poll_inventory") to find the freshest snapshot, then filtering port_forwards on that run_id. But port_forwards rows aren't written by poll_inventory. They're written by poll_port_forwards, a separate scheduled job with its own run_id sequence. The WHERE clause matched zero rows on every evaluation, no matter how many open port forwards lived on my LAN. The evaluator's "no rows = green" branch fired every time.

This is the same bug shape as Wave 3's C-1: the column was right, the join was wrong, the test passed because the fixture wrote the right run_id directly. In production, with two separate pollers feeding the same evaluator, the join was empty. Silent zero. Green status.

The fix was one argument to latest_completed_poll_run(): pass "poll_port_forwards" instead of "poll_inventory". One string changed at the call site. I also added a regression test. It runs both pollers against fixtures with different run_id sequences and asserts that the evaluator finds the port-forward rows from the correct job.

The Second Live Bug, Same Shape, Different Surface

I curled /api/network/vlans and the response was correct except for one column: client_count was zero on every VLAN. My LAN has 30+ clients. According to the response, none of them was attached to any VLAN.

The implementation joined Client.ssid against Wlan.ssid to get clients-per-WLAN, then joined WLAN to VLAN through the network mapping. Reasonable design. Wrong join. The Client.ssid column on UniFi clients is populated with the network NAME ("Default", "OnboardNetwork"), not the wireless SSID name. The wireless SSID lives in Client.essid. The join between Client.ssid and Wlan.ssid matched zero rows because nothing populated either side with the same data.

The test for this surface used a fixture where both Client.ssid and Wlan.ssid were set to the same string. The fixture was wrong in the same way the production poller was right. Same trap as the v5/v6 fixture trap from Phase 4. The test passed; production returned zero.

The fix joined Client.essid (wireless SSID) against Wlan.ssid and added a fallback path for wired clients that joins on the network ID. The regression test's fixture was rebuilt from a live UniFi snapshot, so this bug shape can't recur in CI without first surfacing in a real wire response.
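The corrected client-count join can be sketched over plain dicts. These are assumptions of the sketch: the client and WLAN dict shapes, the is_wired flag, and keying VLAN counts by network_id. Wireless clients join on essid, the broadcast SSID. Wired clients take the fallback path through their own network ID.

```python
from collections import Counter


def client_counts_by_vlan(clients: list[dict], wlans: list[dict]) -> Counter:
    """Count clients per network_id (the ID the VLAN cards are keyed on here).

    Hypothetical shapes:
      client: {"essid": "HomeWiFi" or None, "ssid": "Default", "is_wired": bool, "network_id": "net-1"}
      wlan:   {"ssid": "HomeWiFi", "network_id": "net-1"}
    """
    ssid_to_network = {w["ssid"]: w["network_id"] for w in wlans}
    counts: Counter = Counter()
    for client in clients:
        if client.get("is_wired"):
            # Fallback path: wired clients have no SSID, so use their network ID.
            if client.get("network_id"):
                counts[client["network_id"]] += 1
        elif client.get("essid") in ssid_to_network:
            # Wireless: join on essid (broadcast SSID), not "ssid" (the network name).
            counts[ssid_to_network[client["essid"]]] += 1
    return counts
```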

Both fixes shipped in PR #15 the next morning. One commit message, two regression tests, one updated fixture, two flipped column references. PR #15 was open for forty minutes.

Why the Two Bugs Have the Same Shape as Wave 3's C-1

Step back from the three bugs. They share one feature: the evaluator was correct, the surrounding system was the bug.

- Wave 3 C-1 (caught pre-merge): the evaluator filtered on src_is_any = true; the poller wrote src_is_any = false because the firewall-policy join was missing.

- Live bug 1 (caught post-merge): the evaluator filtered on poll_run_id = latest("poll_inventory"); the rows were tagged with latest("poll_port_forwards").

- Live bug 2 (caught post-merge): the join filtered on Client.ssid = Wlan.ssid; the column with the wireless SSID is Client.essid, not Client.ssid.

In every case the filter looked right on its face: a plausible column, the right operator. In every case the value space of the column in production didn't overlap with the value space in the fixture. CI can't catch this class of bug because CI runs against recorded test inputs, not against the live system. Wave 3 caught the first one because the persona reviewer asked "would this evaluate honestly against a real LAN" instead of "does the test pass." That question doesn't scale to every cross-job, cross-table, cross-column join in the codebase. Two more passed Wave 3 unflagged, and only the live system caught them.
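The "value spaces don't overlap" signature is cheap to measure once you have real values in hand. Here is a hypothetical helper, not something this post says the dashboard runs. It reports what fraction of distinct left-side join keys have a partner on the right. A populated table with zero overlap is the silent zero. The SSID names in the example are placeholders.

```python
def join_key_overlap(left_values: list, right_values: list) -> float:
    """Fraction of distinct left-side join keys that also appear on the right side."""
    left, right = set(left_values), set(right_values)
    if not left:
        return 0.0
    return len(left & right) / len(left)


# Client.ssid holds network names; Wlan.ssid holds broadcast SSIDs.
client_ssid = ["Default", "Default", "OnboardNetwork"]
wlan_ssid = ["HomeWiFi", "IoT"]
print(join_key_overlap(client_ssid, wlan_ssid))  # 0.0
```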

Persona-team review is necessary, not sufficient

The 5-wave persona team caught one CRITICAL pre-merge. Eight findings total, one of them load-bearing. That's exactly what reviewers are good at: lensed pre-merge questions on a focused diff. The two bugs they missed were both cross-job invariants. In one, a poller and an evaluator disagreed on which run_id sequence was canonical. In the other, the upstream API populated a client-record column in a way the developer didn't expect. Neither is visible inside the diff of a single PR. Both were visible in fifteen seconds of curl against the running backend. The lesson isn't "add another persona reviewer." The lesson is that persona review is one layer and live verification is a different layer, and you need both.

What "Live Verification In-Session" Actually Looks Like

The two follow-up bugs were caught in roughly twelve minutes. Here's the loop, exactly as it ran on Monday morning:

- SSH to the Mac mini.

- launchctl kickstart -k gui/$(id -u)/com.cryptoflex.homenet-dashboard.backend. The backend reloads with the merged code. The frontend reloads next time I open the browser.

- curl http://localhost:8000/api/security/signals/latest | jq '.signals[] | select(.name == "port_forward_open")'. Read the value. Compare against my expectation.

- curl http://localhost:8000/api/network/vlans | jq '.[] | {name, client_count}'. Read the values. Compare against my expectation.

- Browser-tab the live dashboard. Click the Network tab. Click the Security tab. Look for the surfaces that were supposed to light up.

- For each disagreement between expected and actual, write a regression test, then write the fix.

That's the whole protocol. No specialized tool. No agent dispatch. Just an SSH session and curl with jq. The first two steps cost about twenty seconds. The next four cost eleven minutes. Twelve minutes of live verification caught two bugs the persona team missed.
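The two curl-and-compare steps can be captured as a tiny script, so "compare against my expectation" is written down instead of held in my head. This is a sketch under assumptions. The port_forward_open signal object is assumed to carry a value field. The thresholds encode facts about my LAN (two open forwards, 30+ clients), not general truths. The fetch function is injectable so the checks can be exercised without a running backend.

```python
import json
import urllib.request
from typing import Any, Callable

BASE_URL = "http://localhost:8000"  # same backend the curl steps hit

# (path, extract, expect, description). Expectations encode what I know about MY LAN.
Check = tuple[str, Callable[[Any], Any], Callable[[Any], bool], str]

CHECKS: list[Check] = [
    (
        "/api/security/signals/latest",
        lambda body: next(
            s for s in body["signals"] if s["name"] == "port_forward_open"
        )["value"],
        lambda value: value >= 2.0,  # qBittorrent + folx Mac are still open
        "port_forward_open should be RED at >= 2.0",
    ),
    (
        "/api/network/vlans",
        lambda body: sum(vlan["client_count"] for vlan in body),
        lambda total: total > 0,  # 30+ clients; an all-zero total is the silent zero
        "VLAN client counts should not all be zero",
    ),
]


def fetch_json(path: str) -> Any:
    with urllib.request.urlopen(BASE_URL + path, timeout=10) as resp:
        return json.load(resp)


def run_checks(checks: list[Check], fetch: Callable[[str], Any] = fetch_json) -> list[str]:
    """Return one message per failed check; an empty list means the smoke passed."""
    failures = []
    for path, extract, expect, description in checks:
        try:
            observed = extract(fetch(path))
        except Exception as exc:  # unreachable backend, missing key, missing signal
            failures.append(f"{path}: {description} (error: {exc!r})")
            continue
        if not expect(observed):
            failures.append(f"{path}: {description} (observed {observed!r})")
    return failures
```

Calling print(run_checks(CHECKS)) right after the kickstart gives the same verdict as the two jq reads. Every message it returns is a regression test waiting to be written.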

The reason this isn't already automatic in my CI is that CI doesn't have a UDM Pro and a Pi-hole on the test runner. It's just got fixtures. The fixtures will, by definition, reflect what the developer thought the wire shape was at the moment the fixture was recorded. If the fixture is wrong in the same way the production poller is right, the test passes and the bug ships. The only place the right shape lives is the live system. The only way to read the live system is to talk to it.

The Local-vs-CI Tool Skew

A side-quest from this session worth surfacing: my local toolchain bit me three times in one afternoon, all from version drift between my dev machine and CI's freshly-resolved versions.

- ruff lint, SIM103. A new lint rule landed in ruff between my local pin and CI's resolution. CI failed on an if x: return True else: return False pattern that my local lint had passed. Fix: refactor to return bool(x). The CI lint isn't more correct than my local lint; it's just newer, and that's enough.

- ruff format. Two files had different formatting decisions between my local cache and CI's resolution. Same shape as the Phase 3 Codex bug: the PR was lint-clean locally and lint-red on CI, because the local version was older.

- prettier. One frontend file had a trailing-comma decision that differed between local and CI prettier. CI failed; local was happy.
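For reference, here is the shape SIM103 (ruff's needless-bool rule, carried over from flake8-simplify) objects to, next to the fix. The function names are hypothetical. The bool() wrapper matters: a and b returns one of its operands, not necessarily a bool, so dropping it would change the return type.

```python
def is_exposed_before(rule: dict) -> bool:
    # Pattern SIM103 flags: an if/else whose branches just return True / False.
    if rule.get("src_is_any") and rule.get("enabled"):
        return True
    else:
        return False


def is_exposed_after(rule: dict) -> bool:
    # The fix: return the condition itself, coerced to bool.
    return bool(rule.get("src_is_any") and rule.get("enabled"))
```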

I resolved this the boring way: there's a make lint target in the Makefile that explicitly mirrors CI's resolution by running with --no-cache and pinning to the latest matching minor. Running that target before pushing catches the skew before CI does. The actual fix is to pin the toolchain versions hard in pyproject.toml and package.json, but that's a future cleanup. For now, the protocol is "run make lint before push", same shape as "curl the API after merge".

Mirror CI's resolution locally before pushing

Local cached toolchains drift from CI's freshly-resolved versions. The cheapest fix is a single make lint target that runs your linters with the same flags CI uses, including --no-cache if your linter has one. The cost is one Makefile target. The benefit is catching version skew before CI blocks the merge. Treat your local lint as a hint and your CI lint as the source of truth, then close the gap with a Makefile target you actually run.

The Numbers At Merge

Phase 1.1 closed Monday afternoon at:

- PRs merged: 2. PR #14 (the feature, 27 files changed, +2,103 −317), PR #15 (the live-verification fixes, 6 files changed, +89 −12).

- Tests at the close: 694 backend pytest, 247 Vitest, 24 Playwright. Coverage above the 80% floor. Backend (3.12), Frontend (Node 20), E2E, fixture-drift all green at the close.

- New surfaces: 5 (VLAN cards, port-forward table, ZBF zone matrix, SSID-to-VLAN Sankey, topology graph).

- New security signals: 2 (port_forward_open, gravity_stale). First-run state on my LAN: port_forward_open RED at 2.0 (qBittorrent + folx Mac), gravity_stale GREEN at 2.03 days.

- Persona-team findings: 8 in Wave 3. 1 was CRITICAL (the port_forward_open population bug). 4 were medium: zone-matrix glyph styling, topology renderer error handling, and two stat-panel copy fixes. The remaining 3 were nice-to-have.

- Post-merge bugs caught by live verification: 2 (poll-run-id mismatch on the security evaluator, SSID column mismatch on the VLAN client-count join). Both fixed in PR #15 the next morning.

- Mode posture: still A. The Network tab is read-only. No mutations on any of the new surfaces. The security signals are evaluator-only. Phase 1.1 doesn't touch the agent surface.

Patterns Worth Carrying Forward (Phase 1.1 Edition)

- Wave 0 against the live API beats fixture review. Fifteen minutes of probing UDM 9.x produced three concrete findings before any code got written. Fixtures freeze at the moment of recording. The live API has changed shape four times since then. If your dashboard depends on an upstream that keeps shipping updates, every slice should start with a Wave 0 probe.

- Persona-team review is a layer, not the whole stack. The 5-wave dispatch caught one CRITICAL pre-merge. It missed two more bugs of the same class. The persona-team layer is necessary because lensed pre-merge questions catch a class of bugs no single reviewer would. It's not sufficient because cross-job invariants don't surface inside a single diff.

- Live verification belongs in the same session as the merge. Twelve minutes of SSH-and-curl caught two bugs that no green CI run could see. The protocol is boring on purpose: kick the backend, curl the new endpoints, browser-tab the new surfaces, write a regression test for every disagreement. Make it cheap. Make it fast. Make it the last thing you do before declaring done.

- A scheduled probe is a backstop, not a goalkeeper. A scheduled remote agent armed for 2026-05-05 will probe the same surface in eight days, then keep running as a recurring backstop. Eight days is too late if the dashboard has been running on a silently-zero security signal the whole time. The schedule catches drift after the fact. Merge-day verification catches it now.

- Add a "Wave 5: prod smoke" step to the persona-team template. My current template runs Wave 0 (probe) through Wave 4 (integration). A formal Wave 5 step that says "kick the backend, curl the endpoints, screenshot the new surfaces" would have added twelve minutes to the Phase 1.1 dispatch and saved one PR. Updating the template is the cheapest possible mitigation.

- Mirror CI's tool resolution locally. A make lint target that runs the linters with the same flags CI uses catches version skew in seconds. The gap between my local cache and CI's freshly-resolved versions bit me three times in one session. One Makefile target makes it stop being a bug class.

What's Next: The Roadmap From Here

| Phase | What ships | Status |
|---|---|---|
| F2.2 false-positive review | Phase 4 follow-up: sample 20 anomalies against +7 days of real data; promote H3/H5 to weight 5/4 if FP profile holds. | Scheduled remote agent fires 2026-05-04 13:00 UTC. |
| Phase 1.1 backstop probe | Recurring scheduled agent re-runs the live probe against the new Network surfaces, looking for wire-shape drift on UDM updates. | Routine trig_01PF8mHj95xBioJcHaMcyV1X armed for 2026-05-05T14:00 UTC. |
| F2.5 score promotion | Phase 4 follow-up: flip H3 and H5 weights from 0 to 5 and 4 if the +14d FP review confirms low noise. | Scheduled remote agent fires 2026-05-11 13:00 UTC. |
| Phase 1.2 (Wi-Fi + RF) | The Wi-Fi tab. Needs the UniFi session-auth follow-up first. | Visible-but-locked in the cyber SideNav. |
| Phase B (A → B) | Mutation unlock. The agent surface stays read-only. PII gate on RecentMutation.session_id lands. | MISSION_CONTROL_MODE env flip + security review. |
| Phase C (B → C) | Agent unlocked in read-only posture. | All Phase 1 plumbing already in place. |
| Phase D (C → D) | Agent drafts mutations, human approves. End state of the four-mode flag. | End state. |

The two scheduled agent runs from Phase 4 carry forward unchanged. Phase 1.1 adds one new recurring agent: a backstop probe that runs the same Wave 0 protocol against the live UDM every week. If the wire shape changes again, the probe surfaces it before a fixture-frozen test passes against an upstream that's moved on.

Phase 1.1 of the dashboard does what Phase 1 did, plus a Network tab that surfaces five new investigation surfaces and two new security signals. It also surfaced two bugs that no green CI run could see, this time in twelve minutes of post-merge verification instead of three days. The marquee feature is the tab. The marquee lesson is that the bug class V2 found is a bug class, not a fluke. Persona review caught one this round. Live verification caught the other two. Both happened in the same session as the merge. Next slice's persona-team template already has Wave 5: prod smoke baked in.

If you've followed this thread from the UniFi MCP, Pi-hole MCP, and /homenet-document posts through to Phase 1, Phase 2, Phase 3, and Phase 4, the through-line is the same: every post is one more reusable primitive on top of the last. Phase 1.1's primitive is the in-session prod smoke. Build the feature, dispatch the persona team, ship the merge, then SSH-and-curl before you walk away from the keyboard. The dashboard tells the truth about my LAN. The smoke makes sure the dashboard tells the truth about itself.
